
AWS Step Functions: Reducing a Long-Running Process by 75%

Initially, we relied on a Lambda-driven system to transfer large data sets every month so users could do their work. Internally, we call this process replication. Over time, replication grew to take several days to complete, and errors increased. In this post, I’ll demonstrate how AWS Step Functions can help reduce errors, minimize processing time, and increase the scalability of your applications.

Where We Started — Challenges in Creating the Workflow

Creating a replication system brings unique challenges. We use AWS Lambda functions to build microservices because they offer many advantages, such as high scalability, cost-efficiency, and increased agility. A typical practice in data processing pipelines is using an “Orchestrator” Lambda to organize services into workflows. This is also called “Lambda Chaining,” where Lambdas invoke other Lambdas. Unfortunately, a Lambda has a maximum runtime of 15 minutes, which makes it a poor fit for long-running tasks: as your data grows, timeouts start to occur. If they are not handled properly, timeouts on larger datasets can cascade into partial operations, incomplete data, and internal errors.

Throttling errors are another challenge we faced. Cloud storage providers have varying usage limits and will prevent further requests after a certain threshold. Since all customers are replicated on the same day, requests must be managed to avoid disruptions.

We needed to address these issues, and thus, we started looking into new solutions.

The Solution: AWS Step Functions

AWS Step Functions simplify the development process of multi-step workflows by using state machines. They’re a better way to coordinate tasks and provide flexible options to customize a workflow to your business’s needs.

Here are some of the state types to choose from:

- Pass: Modify properties between states

- Task: Invoke different actions

- Parallel: Run multiple branches of logic simultaneously

- Map: Apply the same steps to each item in a list

- Choice: Conditional paths based on input

- Wait: Add pauses to a workflow

You can do much more. Be sure to visit the AWS State Machine Patterns to dive deeper.

Some Real-World Examples

In the following examples, we’ll highlight how AWS Step Functions help achieve the following:

- Coordinate and run tasks sequentially, reliably, and efficiently.

- Control and manage the processing of data to prevent throttling.

- Provide higher visibility and real-time monitoring.

- Create a service that can scale to hundreds of concurrent workers.

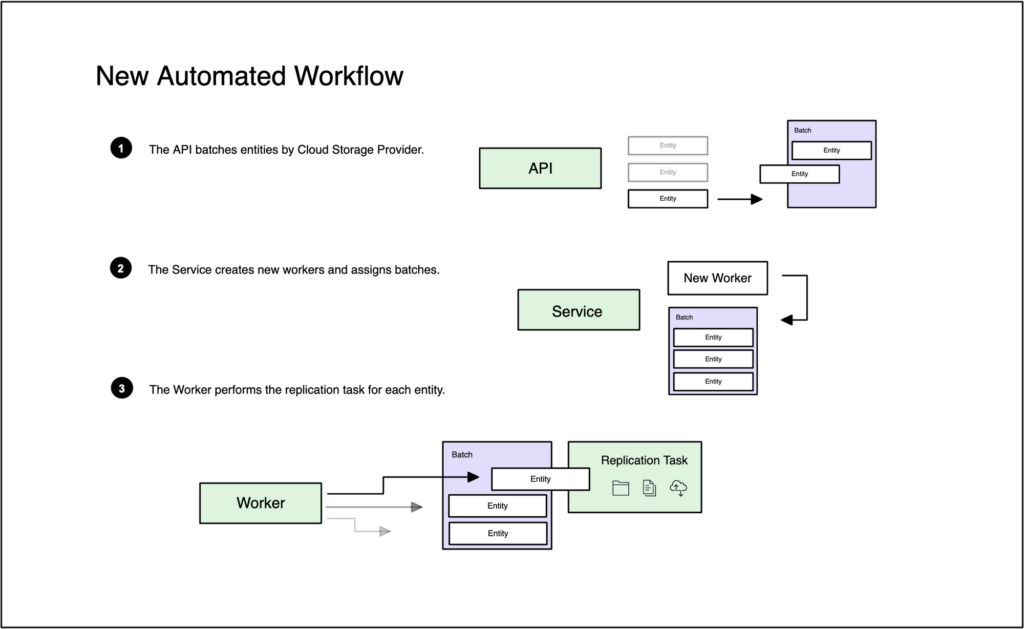

The new workflow automatically performs the replication process for customers at the start of the month.

Let’s do a walkthrough of the different components.

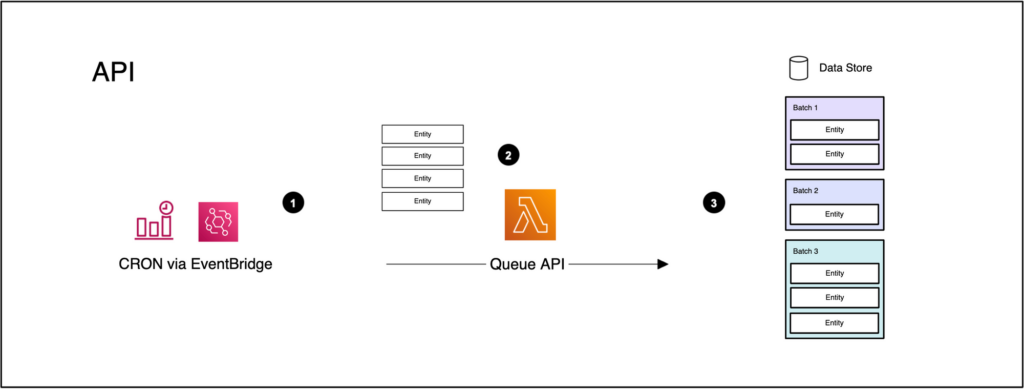

The API

The API is the entry point for the system and receives requests to replicate periods. It groups entities by storage provider so that tasks can be controlled and regulated per provider. This process runs automatically at the beginning of the month using Amazon EventBridge scheduled events.
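To make the grouping step concrete, here is a minimal sketch in Python. It is not our production code: the field names `entity_id` and `storage_provider`, the provider names, and the helper `make_batches` are all hypothetical, chosen only to illustrate grouping entities by provider and splitting each group into batches for workers.

```python
from collections import defaultdict
from typing import Dict, Iterable, List


def group_by_provider(entities: Iterable[dict]) -> Dict[str, List[str]]:
    """Group entity ids by storage provider (field names are assumptions)."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for entity in entities:
        groups[entity["storage_provider"]].append(entity["entity_id"])
    return dict(groups)


def make_batches(entity_ids: List[str], batch_size: int) -> List[List[str]]:
    """Split one provider's entities into fixed-size batches, one per worker."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [
        entity_ids[start:start + batch_size]
        for start in range(0, len(entity_ids), batch_size)
    ]


if __name__ == "__main__":
    # Hypothetical sample data; provider names are placeholders.
    sample = [
        {"entity_id": "e1", "storage_provider": "provider-a"},
        {"entity_id": "e2", "storage_provider": "provider-b"},
        {"entity_id": "e3", "storage_provider": "provider-a"},
    ]
    for provider, ids in group_by_provider(sample).items():
        print(provider, make_batches(ids, batch_size=2))
```

Keeping each provider's entities in their own group is what lets the rest of the system pace requests per provider rather than globally.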

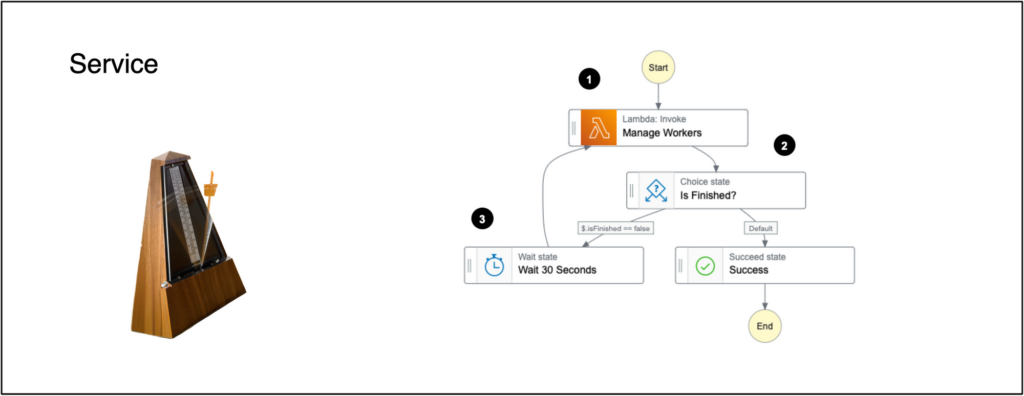

The Service

The service acts like a metronome, used by musicians to maintain tempo and timing. The Wait and Choice states are used here to mimic a pulse. This design pattern periodically runs actions to collect metrics and instantiate new workers for consistent throughput. This component is a central monitoring and control hub for the entire system.
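The pulse can be sketched as a small Amazon States Language definition, built here as a Python dictionary. This is an illustration under assumptions rather than our actual state machine: the state names, the `$.status.remaining` field, and the Lambda ARN passed in are hypothetical.

```python
import json


def build_service_definition(tick_seconds: int, tick_lambda_arn: str) -> dict:
    """Build a Task -> Choice -> Wait loop that repeats until no work remains."""
    return {
        "Comment": "Metronome loop: act, check remaining work, pause, repeat",
        "StartAt": "Tick",
        "States": {
            # Hypothetical Lambda that collects metrics and starts new workers.
            "Tick": {
                "Type": "Task",
                "Resource": tick_lambda_arn,
                "ResultPath": "$.status",
                "Next": "AnyWorkLeft",
            },
            # Keep looping while the (assumed) remaining counter is above zero.
            "AnyWorkLeft": {
                "Type": "Choice",
                "Choices": [
                    {
                        "Variable": "$.status.remaining",
                        "NumericGreaterThan": 0,
                        "Next": "Pause",
                    }
                ],
                "Default": "Done",
            },
            # The Wait state sets the tempo of the loop.
            "Pause": {"Type": "Wait", "Seconds": tick_seconds, "Next": "Tick"},
            "Done": {"Type": "Succeed"},
        },
    }


if __name__ == "__main__":
    definition = build_service_definition(
        60, "arn:aws:lambda:us-east-1:123456789012:function:tick"  # placeholder ARN
    )
    print(json.dumps(definition, indent=2))
```

The printed JSON could be used as a state machine definition; the Wait duration controls how often metrics are collected and new workers are started.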

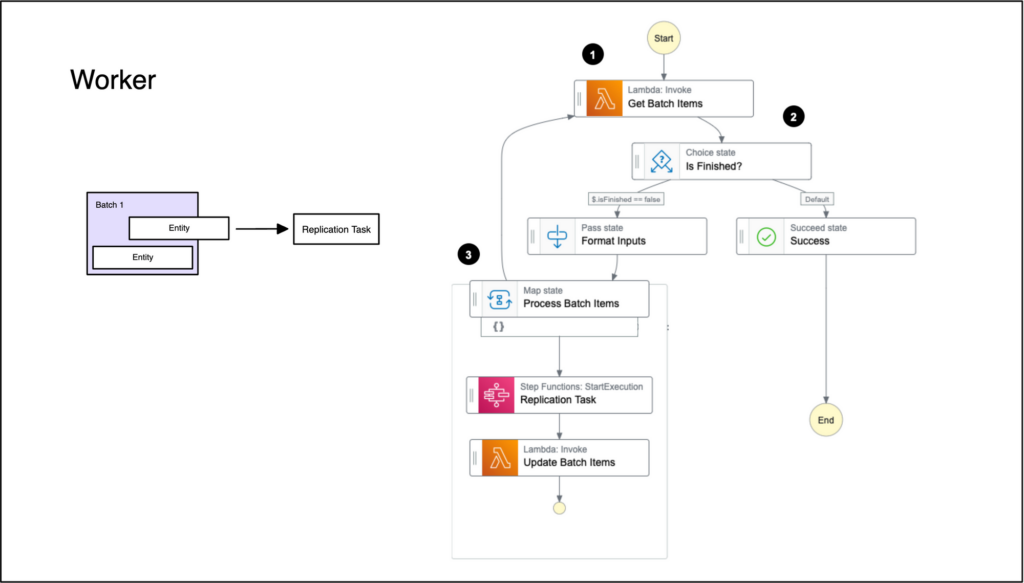

The Worker

Each worker is assigned a batch and will process entities sequentially to prevent throttling. This Step Function calls another Step Function, demonstrating the modularity of workflows through nesting.
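One way to express this worker is a Map state limited to one iteration at a time, with each iteration starting a nested execution and waiting for it to finish. The sketch below is an assumption about how such a worker could look, not a copy of ours: the state names, the `$.batch` input path, and the `entityId` input field are hypothetical.

```python
import json


def build_worker_definition(replication_state_machine_arn: str) -> dict:
    """Process a batch one entity at a time, running a nested state machine per entity."""
    return {
        "StartAt": "ProcessBatch",
        "States": {
            "ProcessBatch": {
                "Type": "Map",
                "ItemsPath": "$.batch",  # assumed input shape: {"batch": [...]}
                "MaxConcurrency": 1,     # one entity at a time, to avoid throttling
                "ItemProcessor": {
                    "ProcessorConfig": {"Mode": "INLINE"},
                    "StartAt": "ReplicateEntity",
                    "States": {
                        "ReplicateEntity": {
                            "Type": "Task",
                            # Service integration that starts a nested execution
                            # and waits for it to complete.
                            "Resource": "arn:aws:states:::states:startExecution.sync:2",
                            "Parameters": {
                                "StateMachineArn": replication_state_machine_arn,
                                "Input": {"entityId.$": "$"},
                            },
                            "End": True,
                        }
                    },
                },
                "End": True,
            }
        },
    }


if __name__ == "__main__":
    arn = "arn:aws:states:us-east-1:123456789012:stateMachine:replication"  # placeholder
    print(json.dumps(build_worker_definition(arn), indent=2))
```

Setting `MaxConcurrency` to 1 is what makes the batch sequential; raising it would trade throttling safety for speed.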

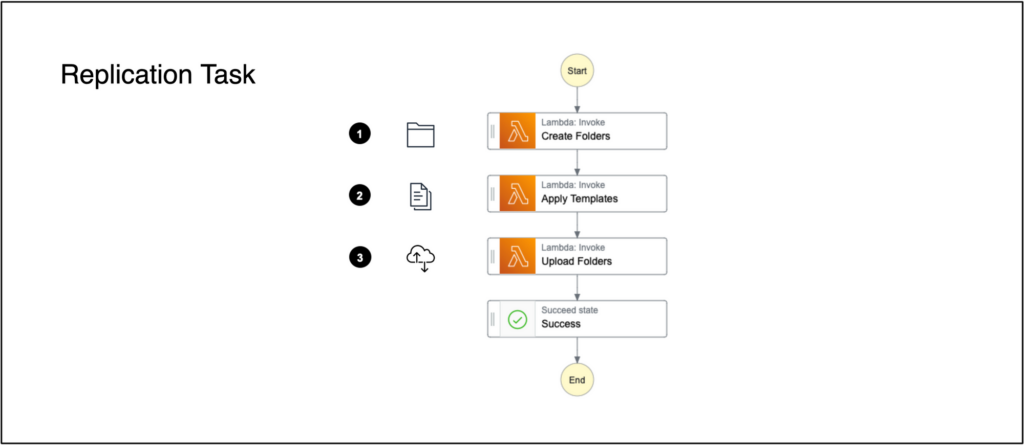

The Replication Task

The replication process can be broken down into 3 stages:

- Create Folders: Builds the folder tree structure for a new period.

- Apply Templates: Creates checklists and reconciliations with new due dates.

- Upload Folders: Syncs folders and documents to the user’s cloud storage provider.

Using small independent steps instead of a single long-running task removes the risk of timeouts.
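The three stages above can be sketched as a chain of Task states, each backed by its own short-lived function. This is a hedged illustration: the state names, the ARNs, and the retry settings (three attempts with exponential backoff) are assumptions for the example, not values from our system.

```python
import json
from typing import List, Tuple


def build_replication_definition(stages: List[Tuple[str, str]]) -> dict:
    """Chain (state name, resource ARN) pairs into sequential Task states."""
    if not stages:
        raise ValueError("at least one stage is required")
    states = {}
    for index, (name, resource_arn) in enumerate(stages):
        state = {
            "Type": "Task",
            "Resource": resource_arn,
            # Illustrative retry policy; tune the values for your own workload.
            "Retry": [
                {
                    "ErrorEquals": ["States.TaskFailed"],
                    "IntervalSeconds": 5,
                    "MaxAttempts": 3,
                    "BackoffRate": 2.0,
                }
            ],
        }
        if index + 1 < len(stages):
            state["Next"] = stages[index + 1][0]  # hand off to the next stage
        else:
            state["End"] = True  # the last stage ends the execution
        states[name] = state
    return {"StartAt": stages[0][0], "States": states}


if __name__ == "__main__":
    prefix = "arn:aws:lambda:us-east-1:123456789012:function:"  # placeholder
    definition = build_replication_definition(
        [
            ("CreateFolders", prefix + "create-folders"),
            ("ApplyTemplates", prefix + "apply-templates"),
            ("UploadFolders", prefix + "upload-folders"),
        ]
    )
    print(json.dumps(definition, indent=2))
```

Because each stage is its own state, a failure is retried at that stage only, and no single function has to run for the whole replication.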

Results and Outcome

With this new workflow, we successfully addressed the challenges previously faced. By breaking down the process into separate steps, we increased scalability and eliminated the risk of timeouts. This enables us to easily accommodate future growth and updates.

Through entity batching, we optimized the number of workers used, increasing throughput and reducing replication time by 75%. Previously, the system took 6 hours to complete, but now it only takes 1.5 hours. This significantly improved our efficiency and overall productivity.

Furthermore, grouping tasks by storage provider has effectively prevented API throttling. We saved extra processing time and minimized the number of errors. In fact, throttling previously accounted for 68% of the errors, but this was reduced to 2%.

Conclusion

The new workflow has proved to be more efficient and streamlined. The improvements have resulted in tangible benefits, including fewer errors and shorter processing time. AWS Step Functions are a game-changer, and there’s much more to explore. I hope this post has inspired you to try AWS Step Functions to build new and exciting workflows.
